SEOUL, March 17 (AJP) - The world’s most consequential semiconductor rivalry is increasingly being fought not in fabs but on the stage of artificial intelligence.

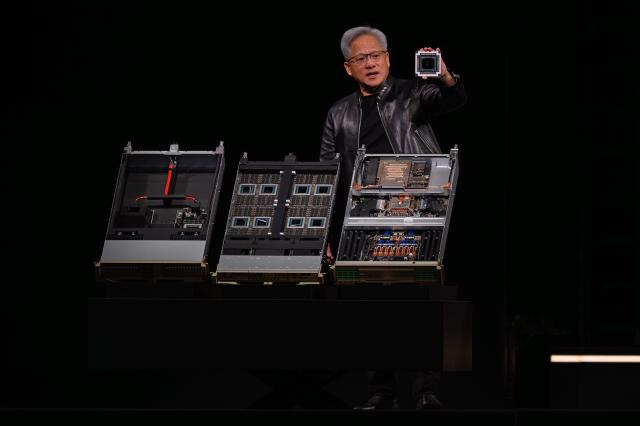

This year’s NVIDIA GPU Technology Conference (GTC) in San Jose, the annual gathering hosted by NVIDIA CEO Jensen Huang, drew the usual global crowd eager to hear where AI infrastructure is headed next.

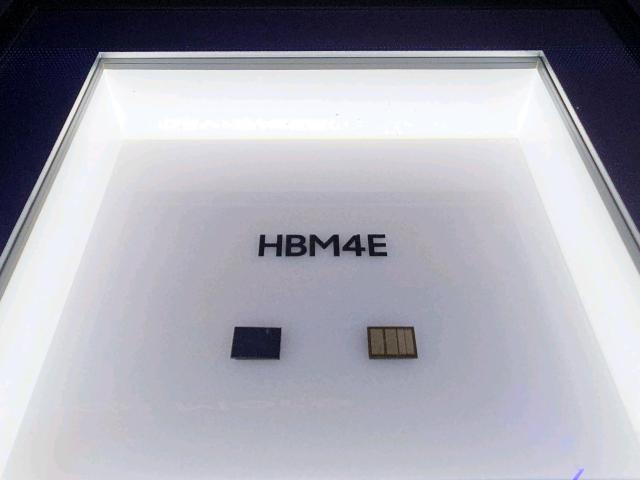

But behind the keynote spectacle, another story unfolded: South Korea’s memory giants Samsung Electronics and SK hynix quietly squared off in what is becoming the semiconductor industry’s most decisive battleground — HBM4, the next generation of high-bandwidth memory powering AI accelerators.

The rivalry sharpened after Huang unveiled NVIDIA’s next-generation Vera Rubin AI platform, alongside the Groq 3 Language Processing Unit, a specialized inference processor manufactured by Samsung’s foundry division.

The message from the stage was unmistakable. AI computing demand is entering a new phase — and the companies that supply memory will determine who captures the value.

“We are heading toward a world where AI infrastructure becomes a trillion-dollar industry,” Huang told the audience, projecting at least $1 trillion in revenue by 2027 as demand for accelerated computing explodes.

For Samsung, the event served as a strategic reset.

The world’s largest memory maker has spent much of the past two years trying to regain ground in the high-bandwidth memory segment after falling behind SK hynix in NVIDIA’s supply chain.

At GTC, Samsung came armed with a clear message: it intends to retake the technological lead.

The company showcased its sixth-generation HBM4, now entering mass production, and publicly introduced its successor, HBM4E, signaling an aggressive roadmap aimed squarely at next-generation AI accelerators.

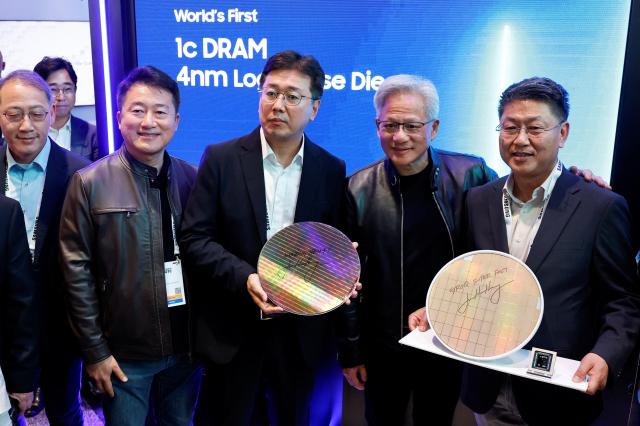

Samsung’s pitch leaned heavily on a structural advantage it believes competitors cannot easily replicate: its position as the industry’s only fully integrated device manufacturer (IDM) capable of delivering a complete AI chip stack.

The company highlighted a “total solution” approach combining 1c-nanometer-class DRAM dies, 4-nanometer foundry logic dies, and advanced 2.5D and 3D packaging technologies.

By controlling memory, logic fabrication and packaging within one ecosystem, Samsung argues it can shorten design cycles and accelerate deployment for hyperscale AI customers.

That strategy gained visibility during Huang’s keynote when he confirmed that the Groq 3 LPU, optimized for ultra-fast AI inference, will begin shipping in the second half of the year.

“I want to say thank you to Samsung,” Huang said from the stage. “They are cranking as hard as they can.”

The remark underscored Samsung’s role in scaling production for the next generation of AI silicon.

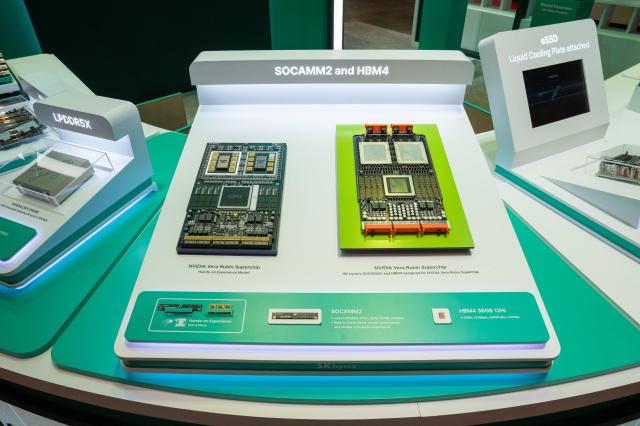

But SK hynix, NVIDIA’s dominant memory partner for its past and current generations of chips, isn’t ready to cede its position.

Under the theme “Spotlight on AI Memory,” the company emphasized its established position within NVIDIA’s ecosystem, a relationship built over several product cycles.

SK hynix highlighted its HBM3E and its upcoming HBM4, along with memory solutions already integrated into the NVIDIA DGX Spark AI supercomputer, positioning its products as the industry benchmark for reliability and mass production.

The company’s presence was also notable for the level of leadership attending the event.

Chey Tae-won, chairman of SK Group, appeared alongside senior executives to reinforce what insiders often call the “triangular alliance” linking SK hynix, NVIDIA and TSMC.

That partnership model contrasts sharply with Samsung’s vertically integrated strategy.

Where Samsung emphasizes end-to-end control of semiconductor manufacturing, SK hynix is doubling down on specialized collaboration, relying on deep engineering integration with NVIDIA and advanced logic fabrication from TSMC.

The approach has paid off so far.

SK hynix remains the primary supplier of the HBM used in NVIDIA’s most powerful AI accelerators, which are currently deployed across hyperscale data centers.

The confrontation at GTC reflects a deeper shift underway in the semiconductor industry.

For decades, memory companies competed largely on manufacturing scale and cost efficiency. In the AI era, the competition is increasingly about system architecture.

HBM, which stacks DRAM dies vertically, connects them with through-silicon vias and links the stack to the processor through an ultra-wide interface, has become the critical bottleneck for AI performance. The memory must deliver enormous bandwidth while staying tightly coupled to GPUs and custom accelerators.

That shift is forcing memory makers to operate less like commodity suppliers and more like system engineering partners.

Samsung is betting that its turnkey semiconductor ecosystem will allow it to integrate memory, logic and packaging into unified AI modules.

SK hynix is betting that deep specialization and ecosystem partnerships will preserve its lead.

The stakes could hardly be higher.

During his keynote, Huang described the scale of change in stark terms.

Computing demand, he said, has grown a millionfold over the past two years as generative AI moves from experimentation to real economic work.

“AI has finally become able to do productive work,” Huang said.

Investors quickly picked up on the implications.

Shares of Samsung Electronics rose sharply following the GTC announcements. As of 10:05 a.m. KST, the stock was trading at 195,700 won, up 3.71 percent from the previous session.

SK hynix also gained ground, climbing to 994,000 won, up 2.05 percent, reflecting broad optimism about the expanding role of Korean memory suppliers in the global AI semiconductor supply chain.

Analysts say the next two years will likely determine the long-term hierarchy in the HBM market.

With NVIDIA preparing the Vera Rubin generation of AI systems and hyperscale data centers expanding at unprecedented speed, demand for high-bandwidth memory is expected to surge.

Some projections suggest Samsung’s HBM revenue alone could more than triple in 2026 if the company successfully ramps production.

But SK hynix is unlikely to relinquish its lead without a fight.

At GTC, the message from both companies was clear.

The AI boom has created a semiconductor arms race — and the decisive battle may be fought not over GPUs, but over the memory stacked beside them.

Copyright ⓒ Aju Press All rights reserved.